A due diligence process lands, or an enterprise customer asks their first hard security question. Hopefully, this happens before data actually walks out the door. Yet, there it sits: a third-party Docker Hub image running in production. The same story plays out on most local dev machines.

Most teams respond in one of two ways. They either pay for Chainguard or Docker Hardened Images on a team plan, which usually sits outside the budget of a 30-person shop. Alternatively, they shrug and promise to look at it later, claiming it isn't critical.

You have another option. Build and host the container yourself on your own terms. It requires real work but delivers the exact artifacts an auditor will sign off on.

"AI ships the bugs. AI ships the exploits. The number of projects sitting on critical CVEs is climbing fast, and protecting yourself is no longer optional."

Why Build Your Own Container Pipeline: The transfer.sh Abandonment Story

We needed a small tool to move files from A to B without exposing them to the public internet. A self-hosted, secure replacement for WeTransfer. Our AI advisor suggested dutchcoders/transfer.sh. It is a popular self-hosted file-sharing tool in the Go ecosystem and seemed like the perfect fit. It offered simple uploads and downloads without a UI or complex authentication schemes. A minimal feature set usually means a minimal attack surface. It was exactly the shape of tool we were after.

That is also the trap. Many teams blindly follow this advice: "transfer.sh is popular, here's the docker-compose, ship it." That would be fatal.

First scan: 4 CRITICAL and 16 HIGH in the Go dependencies. The CVEs were months old. The upstream repo had seen no commits since 2023. The project had quietly died, yet Docker Hub was happily serving the corpse.

We looked for a maintained fork but found nothing active enough for production. We switched to Forceu/Gokapi, an actively maintained Firefox-Send-style alternative in Go. Gokapi is more tool than we strictly need. However, rolling our own minimal replacement isn't on the cards. Building one with AI takes a weekend; maintaining it takes years. Those are two different jobs.

This failure mode makes a casual docker pull dangerous in production. A widely-used tool drifts from actively maintained to effectively abandoned with no announcement. Docker Hub keeps serving it. Your cluster keeps pulling it. CVEs accumulate invisibly.

The point isn't how many CVEs transfer.sh accumulated. The point is that we look every single time. The pipeline below makes that sustainable.

Three Controls for a Secure Docker Supply Chain: Build, Scan, Harden

To build a secure supply chain without expensive enterprise tools, you must implement three non-negotiable controls:

- Build it yourself. No Docker Hub pulls in production. You must own the registry and the build logs.

- Scan and gate. Every build must ship an SBOM along with a scanner report and a human-readable diff against upstream. Any CRITICAL or HIGH finding with an available fix blocks the push.

- Harden the base. Stop relying on Alpine or Debian-slim with their monthly CVE churn. Rebase onto a minimal base, distroless by default, so your runtime attack surface shrinks to roughly what your binary actually uses.

These steps form a supply-chain control story that survives due diligence. Skip the base hardening, and Trivy reports become a monthly waiver-filing ritual against upstream Alpine CVEs. That is how scanning every image degrades into scanning and ignoring every image.

Daily Operations: How Automated CVE Scanning Looks in Practice

10 AM. Second coffee. The pipeline finished while we were asleep. A notification is waiting in the inbox:

(A) "Gokapi v2.2.4 → v2.2.5. Trivy clean, SBOM updated. PR ready." You skim the diff, approve, and merge. ArgoCD picks up the new digest.

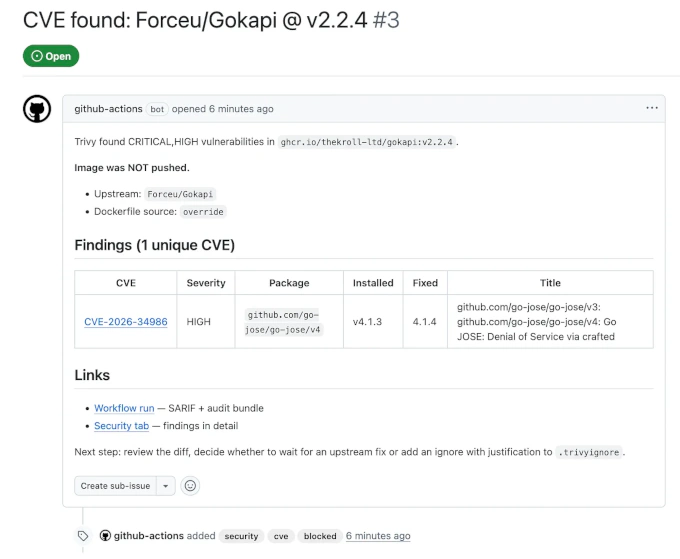

(B) "Gokapi v2.2.5 build BLOCKED. HIGH CVE in a dependency. Image NOT pushed. Issue opened." You open the issue, read the Trivy output, and make a decision. You can wait for an upstream fix or add a documented waiver to .trivyignore.

Either way, nothing unreviewed lands in your cluster. A build either passes clean and waits for your review, or blocks with a documented issue. It costs roughly zero extra minutes a day once it's running.
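A documented waiver only helps in an audit if the rationale sits next to the ID. The sketch below is one way to check that, assuming a convention that is ours and not a Trivy feature: every ID in .trivyignore carries a comment block directly above it. The function names and the placeholder CVE IDs are hypothetical.

```python
def parse_waivers(text: str) -> dict[str, str]:
    """Map each waived vulnerability ID to the comment block directly above it."""
    waivers: dict[str, str] = {}
    pending: list[str] = []  # comment lines seen since the last ID or blank line
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            pending = []  # a blank line detaches earlier comments
        elif line.startswith("#"):
            pending.append(line.lstrip("#").strip())
        else:
            vuln_id = line.split()[0]  # ignore anything after the ID
            waivers[vuln_id] = " ".join(pending)
            pending = []
    return waivers


def undocumented(waivers: dict[str, str]) -> list[str]:
    """Return waived IDs that have no rationale comment."""
    return sorted(vid for vid, why in waivers.items() if not why)


# Placeholder IDs for illustration only; these are not real CVEs.
EXAMPLE = """\
# Code path not reachable in our deployment; revisit on next upstream release.
CVE-0000-0001

CVE-0000-0002
"""

if __name__ == "__main__":
    print(undocumented(parse_waivers(EXAMPLE)))
```

A check like this could run as a CI step so that a bare ID without a rationale fails review before it silences a finding.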

What This CVE Pipeline Catches — and What It Doesn't

It catches registry-side image tampering since you pull from your own GHCR, along with missing CVE visibility at deploy time. It also handles the base-image CVE backlog and insulates you from Docker Hub outages. Crucially, we learned it catches abandoned upstreams that quietly stopped shipping patches. It provides the exact artifacts an auditor wants, including SBOMs and scan reports per upstream bump.

It catches CVEs published after release. The main build job scans at build time. A nightly rescan job then hits the last-built image against the current Trivy database without rebuilding. If a CVE lands tomorrow against a library inside yesterday's deployed image, the rescan surfaces it and auto-files an issue. You decide manually whether to wait for upstream or roll a dependency pin into your override. The pipeline never silently ships new digests. This covers the gap release-driven pipelines have by default.
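The rescan idea reduces to a set difference between two scan reports of the same image. Here is a minimal sketch, assuming both reports are Trivy JSON output (as we understand it, a top-level Results list whose entries carry a Vulnerabilities list). The function names and the sample data are hypothetical, and the template's actual rescan job may work differently.

```python
def index_findings(report: dict) -> dict[tuple[str, str], str]:
    """Index a Trivy-style JSON report by (vulnerability ID, package) -> severity."""
    found: dict[tuple[str, str], str] = {}
    for result in report.get("Results") or []:
        # "Vulnerabilities" can be missing or null when a target is clean.
        for vuln in result.get("Vulnerabilities") or []:
            key = (vuln["VulnerabilityID"], vuln["PkgName"])
            found[key] = vuln.get("Severity", "UNKNOWN")
    return found


def new_findings(previous: dict, current: dict) -> list[tuple[str, str, str]]:
    """Findings present in the current scan but absent from the previous one."""
    before = index_findings(previous)
    after = index_findings(current)
    return sorted(
        (vid, pkg, sev) for (vid, pkg), sev in after.items() if (vid, pkg) not in before
    )


# Placeholder data: same image, scanned against yesterday's and today's database.
YESTERDAY = {"Results": [{"Vulnerabilities": [
    {"VulnerabilityID": "CVE-0000-0001", "PkgName": "example.com/lib", "Severity": "HIGH"},
]}]}
TODAY = {"Results": [{"Vulnerabilities": [
    {"VulnerabilityID": "CVE-0000-0001", "PkgName": "example.com/lib", "Severity": "HIGH"},
    {"VulnerabilityID": "CVE-0000-0002", "PkgName": "stdlib", "Severity": "HIGH"},
]}]}

if __name__ == "__main__":
    for vid, pkg, sev in new_findings(YESTERDAY, TODAY):
        print(f"new since last scan: {vid} in {pkg} ({sev})")
```

Keying on ID plus package keeps a severity re-rating from showing up as a brand-new finding; whether that is the right call depends on your issue-filing policy.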

It doesn't catch a sophisticated source-code backdoor from a compromised upstream maintainer like the xz scenario. No scanner catches that cleanly, and anyone selling you otherwise is overclaiming. What the pipeline does give you for that threat class is a documented diff-review artifact at every upstream bump. A human reviews it before deployment. This is the same control a well-run enterprise applies. It is defensible in an audit, which is more than most SMBs have today.

The whole problem stays invisible until the first due diligence questionnaire drags it into the open.

Setting Up Your Trivy + Distroless Pipeline in GitHub Actions

The 5-Minute Setup: Fork, Configure, Ship

Here is how you stand up your own mirror in four steps.

1. Fork the template. Go to https://github.com/THEKROLL-LTD/oss-mirror-build and click "Use this template". You now own a copy.

2. Set two env vars. Open .github/workflows/build.yml and edit the env block at the top:

    env:
      UPSTREAM_REPO: "Forceu/Gokapi"
      IMAGE_NAME: "ghcr.io/your-org/gokapi"

3. Drop in your hardened Dockerfile. Save it at dockerfiles/Dockerfile.override. The Gokapi-on-distroless example sits in the next section for you to copy and adjust for your upstream.

4. Commit and push. The nightly cron handles the rest.

From here on, the workflow runs every night:

- Check for a new upstream tag. If nothing changed, it exits.

- Clone upstream at the new tag. This requires zero fork maintenance since the source is unchanged.

- Apply your Dockerfile override. If dockerfiles/Dockerfile.override exists, it replaces the upstream version, allowing you to rebase onto distroless.

- Emit a diff-review artifact. This includes the commit log and full patch between the last built tag and the new one with 90-day retention.

- Build the image once and load it locally.

- Scan with Trivy. It generates a SARIF for the Security tab and a CycloneDX SBOM. CRITICAL or HIGH findings with an available fix block the push.

- Pass or block. If blocked, it auto-files an issue. If passed, it pushes to GHCR and opens a PR against main with the new digest pin. After you review and merge, Flux or ArgoCD sees the updated image-pin.yml and rolls out the exact image CI scanned.
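The pass-or-block decision in the list above can be sketched as a small function over a Trivy JSON report. This is an illustration of the rule as described here (CRITICAL or HIGH with an available fix, minus documented waivers), not the template's actual implementation. The function names, the waiver set, and the placeholder CVE IDs are assumptions; the go-jose versions are the ones mentioned in this article.

```python
from typing import AbstractSet

BLOCKING_SEVERITIES = {"CRITICAL", "HIGH"}


def blocking_findings(report: dict, waived: AbstractSet[str] = frozenset()) -> list[dict]:
    """Return findings that should stop the push under the rule described above."""
    blockers = []
    for result in report.get("Results") or []:
        for vuln in result.get("Vulnerabilities") or []:
            if vuln.get("Severity") not in BLOCKING_SEVERITIES:
                continue  # below the gate threshold
            if not vuln.get("FixedVersion"):
                continue  # no fix available yet, so blocking would not help
            if vuln["VulnerabilityID"] in waived:
                continue  # covered by a documented waiver
            blockers.append(vuln)
    return blockers


def gate(report: dict, waived: AbstractSet[str] = frozenset()) -> str:
    """Return "block" or "push" for a scan report."""
    return "block" if blocking_findings(report, waived) else "push"


# Placeholder report; the CVE IDs are not real.
SAMPLE_REPORT = {"Results": [{"Target": "gokapi", "Type": "gobinary", "Vulnerabilities": [
    {"VulnerabilityID": "CVE-0000-0001", "PkgName": "github.com/go-jose/go-jose/v4",
     "InstalledVersion": "v4.1.3", "FixedVersion": "4.1.4", "Severity": "HIGH"},
    {"VulnerabilityID": "CVE-0000-0002", "PkgName": "example.com/unfixed",
     "InstalledVersion": "v1.0.0", "FixedVersion": "", "Severity": "CRITICAL"},
    {"VulnerabilityID": "CVE-0000-0003", "PkgName": "example.com/minor",
     "InstalledVersion": "v1.0.0", "FixedVersion": "1.0.1", "Severity": "MEDIUM"},
]}]}

if __name__ == "__main__":
    print(gate(SAMPLE_REPORT))
    print(gate(SAMPLE_REPORT, waived={"CVE-0000-0001"}))
```

In practice Trivy's own severity and ignore-unfixed options can enforce the same rule through the exit code; the explicit version here just makes the decision easy to read and test.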

Can Claude Just Automate This For You?

The model side is easy. Claude Code with Opus 4.7 or Codex with GPT-5.5 will generate a working override in a few minutes. Pointing the agent at a repo is only half the battle. Knowing what you actually want and recognizing when the model is confidently wrong is the hard part. On a complex app where you don't know how the code is structured, the agent will gladly hand you a beautifully scanned, fully reproducible disaster. For most overrides, however, it is genuinely easy.

You must read what the agent shipped, understand it, and sign off on it. Skimming is not enough. The agent doesn't know if a CVE is exploitable from your topology, nor does it know your waiver policy. It will not push back on a 400-line diff on your behalf. That part stays human.

Save the scaffolding time and spend it on review. You get a five-minute setup and nightly automation, but judgment remains in human hands.

Distroless Migration in Practice: Gokapi v2.2.4 Audit

Baseline Trivy Scan: 8 HIGH CVEs in Upstream Gokapi v2.2.4

Run Trivy against the upstream Gokapi v2.2.4 image using trivy image forceu/gokapi:v2.2.4. You get 8 HIGH findings sitting in the binary you were about to deploy. Most teams never scan and never know.

Rebuild with our override using a distroless/static:nonroot runtime and a Go 1.26.2 build stage, then rescan.

The result: Only 1 HIGH left.

Seven of the eight findings are gone. We used the same upstream code and release tag without Gokapi-side patches. We simply applied a different base layer and a fresher toolchain. The vulnerabilities that disappeared were never about Gokapi; they were about what Gokapi was being shipped on top of.

The single HIGH finding that remained sat in a code path our deployment doesn't exercise. We assessed it, filed a documented waiver, and shipped. The pipeline surfaced the finding so a human could make the call. The rationale is on record. This is exactly how the gate is supposed to work when blocking forever isn't an option.

The remaining finding was in go-jose/v4: a Go JOSE denial-of-service via crafted input, fixed in v4.1.4, while we were on v4.1.3 transitively. The build blocked, the image was not pushed, and the tracker received structured findings with cve, blocked, and security labels. Trivy scans the built binary rather than go.mod, so the report covers what actually ships after the linker has trimmed unused paths. From here, a human decides to wait, fix, or waive. We waived. Now look at why.

After the Distroless Override: 7 of 8 CVEs Resolved

Gokapi's upstream Dockerfile uses a clean Go-Alpine build stage followed by a runtime layer of FROM alpine:3.19. It includes su-exec, tini, ca-certificates, curl, tzdata, and a shell entrypoint script. Our override swaps the runtime stage for gcr.io/distroless/static:nonroot:

    FROM golang:1.26.2-alpine AS build
    WORKDIR /src
    RUN apk add --no-cache git
    COPY . .
    RUN go generate ./... && \
        CGO_ENABLED=0 go build -trimpath -ldflags="-s -w" \
        -o /out/gokapi github.com/forceu/gokapi/cmd/gokapi

    FROM gcr.io/distroless/static:nonroot
    COPY --from=build /out/gokapi /gokapi
    USER nonroot:nonroot
    EXPOSE 53842
    ENTRYPOINT ["/gokapi"]

Several elements vanish on purpose. su-exec and tini act as workarounds for raw docker run. Kubernetes handles signal forwarding and privilege-dropping natively, and Go's standard library handles SIGTERM cleanly on its own. The upstream entrypoint script mostly switches UIDs at container start. Since distroless's :nonroot variant runs as UID 65532 out of the box, the script's job is already done. curl exists for raw-Docker health checks, whereas Kubernetes probes hit HTTP endpoints directly.

Distroless is Google's minimal-base-images project, licensed under Apache-2.0 since 2017. The static:nonroot variant is around 2 MB. It includes CA certificates, /etc/passwd, and tzdata, but ships no shell, no package manager, and no utilities such as wget.

How the Override Eliminates CVEs: Mechanics in Detail

Where the seven went. Six split cleanly by mechanism. Two were in Alpine-layer packages (musl, musl-utils) and vanished with the base swap. Four were Go stdlib CVEs, gone because the stdlib is linked into the binary and the toolchain bump rebuilt them. The seventh was in the application layer and cleared upstream between our pre- and post-override scans. The single HIGH that remained also sat in the application layer within a code path our deployment doesn't use. We waived it, documented the call, and shipped. The override clears the base-image backlog and the toolchain backlog in one pass. The application-layer backlog stays a manual judgment.
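The split by mechanism can be read straight off a scan report. The sketch below assumes Trivy's JSON output tags OS packages with Class "os-pkgs" and reports Go standard-library findings under the package name "stdlib" in "gobinary" results; treat both field values as our understanding and verify them against your own report. The sample mirrors the pre-override counts from this article with placeholder CVE IDs, and the function names are hypothetical.

```python
from collections import Counter


def mechanism(result: dict, vuln: dict) -> str:
    """Bucket a finding by which layer of the build would have to change to clear it."""
    if result.get("Class") == "os-pkgs":
        return "base image"  # cleared by swapping the runtime base
    if result.get("Type") == "gobinary" and vuln.get("PkgName") == "stdlib":
        return "go toolchain"  # cleared by rebuilding on a newer Go release
    return "application"  # needs an upstream fix, a dependency pin, or a waiver


def count_by_mechanism(report: dict) -> dict[str, int]:
    counts: Counter = Counter()
    for result in report.get("Results") or []:
        for vuln in result.get("Vulnerabilities") or []:
            counts[mechanism(result, vuln)] += 1
    return dict(counts)


def _vulns(pkg: str, ids: range) -> list[dict]:
    # Placeholder findings; the IDs are not real CVEs.
    return [{"VulnerabilityID": f"CVE-0000-{i:04d}", "PkgName": pkg} for i in ids]


BASELINE = {"Results": [
    {"Class": "os-pkgs", "Type": "alpine",
     "Vulnerabilities": _vulns("musl", range(1, 2)) + _vulns("musl-utils", range(2, 3))},
    {"Class": "lang-pkgs", "Type": "gobinary",
     "Vulnerabilities": _vulns("stdlib", range(3, 7)) + _vulns("example.com/app-dep", range(7, 9))},
]}

if __name__ == "__main__":
    print(count_by_mechanism(BASELINE))
```

A breakdown like this is useful before writing an override: it shows how much of the backlog a base swap and toolchain bump can clear, and how much remains a manual judgment.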

Those two Alpine findings represent a lower bound. The v2.2.4 release Docker Hub currently serves pins FROM alpine:3.19, which has been EOL since late 2025. Trivy flags this mid-scan, noting that the OS version is no longer supported and vulnerability detection may be insufficient. Backported fixes don't exist for that layer anymore. Upstream master is already on alpine:3.23 without a release yet. The gap between a CVE landing, an EOL kicking in, and a release catching up leaves users blind. The override cuts the dependency so we pick our own base on our own cadence.

A subtlety on the build stage. You might assume the CVEs in golang:1.26.2-alpine don't matter since it gets discarded after COPY --from=build. That is mostly true, with one exception: the Go toolchain links the standard library into the resulting binary. That is how the four Go stdlib CVEs vanished. We simply rebuilt on a newer Go. The builder Go release acts as a runtime-security lever rather than just builder hygiene. Renovate tracks new Go releases automatically, turning every stdlib patch into a reviewable bump PR.

Pin both FROM lines to digests. The example uses tags for readability, but production overrides should use strict SHA256 digests. Tags are mutable. If Google repoints distroless/static:nonroot overnight for a CA-certificate refresh, a tag-based build picks it up silently. A digest-pinned build stays put until you review the change. Renovate with pinDigests: true handles this by pinning on the first PR it opens and filing a new reviewable PR every time a digest changes.
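A cheap guard against tag-based bases slipping into an override is a lint step that lists every FROM image without an @sha256: digest. The following is a minimal sketch, not part of the template as far as we know. The function name is hypothetical, the all-zero digest is a placeholder, and the parser only handles the common FROM forms (optional --platform flag, optional AS alias).

```python
import re

FROM_RE = re.compile(r"^\s*FROM\s+(?:--platform=\S+\s+)?(\S+)", re.IGNORECASE)
ALIAS_RE = re.compile(r"\s+AS\s+(\S+)", re.IGNORECASE)


def unpinned_bases(dockerfile: str) -> list[str]:
    """Return base images referenced by FROM that are not pinned to a digest."""
    stages: set[str] = set()  # aliases of earlier build stages
    unpinned: list[str] = []
    for line in dockerfile.splitlines():
        match = FROM_RE.match(line)
        if not match:
            continue
        image = match.group(1)
        is_stage_ref = image.lower() in stages  # e.g. FROM build
        if not is_stage_ref and image != "scratch" and "@sha256:" not in image:
            unpinned.append(image)
        alias = ALIAS_RE.search(line)
        if alias:
            stages.add(alias.group(1).lower())
    return unpinned


TAGGED = """\
FROM golang:1.26.2-alpine AS build
COPY . .
FROM gcr.io/distroless/static:nonroot
COPY --from=build /out/gokapi /gokapi
"""

# Placeholder digest for illustration; substitute the digest you actually reviewed.
PLACEHOLDER = "sha256:" + "0" * 64
PINNED = TAGGED.replace("1.26.2-alpine", f"1.26.2-alpine@{PLACEHOLDER}").replace(
    "static:nonroot", f"static:nonroot@{PLACEHOLDER}"
)

if __name__ == "__main__":
    print(unpinned_bases(TAGGED))
    print(unpinned_bases(PINNED))
```

Keeping the tag next to the digest, as in the pinned example, preserves readability while the digest is what actually gets pulled.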

Why distroless and not Docker Hardened Images? DHI is a solid product, but it sits inside an evolving vendor program with shifting free-tier terms. Distroless is Apache-2.0, Google-maintained, and stable. If you want to avoid vendor lock-in, distroless is the consistent choice. Chainguard represents the commercial tier above both and makes sense when your compliance regime explicitly demands vendor support.

The catch: distroless has no shell. kubectl exec -it pod -- sh doesn't work. For Kubernetes debugging, you need to use kubectl debug with an ephemeral container or maintain a :debug variant for non-production. This is a cultural shift, though not a difficult one.

The override is a one-time job per image, though the effort varies depending on what upstream ships. Gokapi sits at the higher end because its shell entrypoint, su-exec, and tini need translating into orchestrator-level concerns. Budget 30 to 60 minutes for something like that. Upstreams that ship a clean USER nonroot directive and a direct binary entrypoint are closer to a 10-minute swap.

The Audit Trail: SBOMs, SARIF Reports, and Diff Artifacts

For any upstream bump in the last 90 days, you get:

- Diff-review artifact: Commits landed, files changed, reviewer name from the merge commit, and timestamp.

- Trivy scanner report: SARIF in the Security tab, timestamped on every build.

- CycloneDX SBOM: Every package and version exactly as shipped. This is what DORA, SOC 2, and ISO 27001 ask for by name.

- Digest-pin PR history: Every production image rollout goes through a reviewed and merged PR with linked scan evidence.

- Documented base-image choice: dockerfile_source: override on every pinned build, reviewable in a PR.

- Blocked builds with auto-filed issues: Idempotent tracking when a CVE hits a version before it reaches production.

This gives you a documented supply-chain control with artifacts that speak for themselves. It isn't as polished as Chainguard, but it is polished enough to pass an audit.
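An SBOM earns its keep when you can answer "what changed between these two builds" without rebuilding anything. Below is a small sketch over CycloneDX JSON, assuming the standard top-level bomFormat and components fields with name and version per component. The function names and the sample data are hypothetical; the go-jose versions are the ones mentioned earlier in this article.

```python
def components(sbom: dict) -> dict[str, str]:
    """Map component name -> version for a CycloneDX JSON document.

    Simplification: if a name appears twice with different versions, the last wins.
    """
    if sbom.get("bomFormat") != "CycloneDX":
        raise ValueError("not a CycloneDX document")
    return {c.get("name", ""): c.get("version", "") for c in sbom.get("components") or []}


def changed_components(old: dict, new: dict) -> dict[str, tuple]:
    """Components added or bumped in `new`, as name -> (old version or None, new version)."""
    before = components(old)
    after = components(new)
    return {
        name: (before.get(name), version)
        for name, version in after.items()
        if before.get(name) != version
    }


# Placeholder SBOMs for two consecutive builds.
OLD_SBOM = {"bomFormat": "CycloneDX", "components": [
    {"type": "library", "name": "github.com/go-jose/go-jose/v4", "version": "v4.1.3"},
    {"type": "library", "name": "stdlib", "version": "v1.26.2"},
]}
NEW_SBOM = {"bomFormat": "CycloneDX", "components": [
    {"type": "library", "name": "github.com/go-jose/go-jose/v4", "version": "v4.1.4"},
    {"type": "library", "name": "stdlib", "version": "v1.26.2"},
]}

if __name__ == "__main__":
    for name, (was, now) in changed_components(OLD_SBOM, NEW_SBOM).items():
        print(f"{name}: {was} -> {now}")
```

Attaching this kind of summary to the digest-pin PR gives the reviewer the dependency delta alongside the source diff.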

This pipeline does not replace Dependabot on your own code. Dependabot lives upstream and scans the repos you write. This pipeline provides the missing piece for the third-party OSS container layer where you don't own the source.

When This Pipeline Isn't Worth Building

There are scenarios where a different approach wins. Large, well-audited upstreams like official Postgres, Nginx, or Redis offer no marginal value from building yourself. Pin the digest, proxy through a cache, and move on. Highly regulated workloads that require minimal-by-construction SBOMs and vendor support should pay for Chainguard or DHI. Finally, upstreams that release more than weekly break the human-in-the-loop review model.

For everything else, such as a handful of OSS services you self-host for data sovereignty, the template works.

A quick note on licenses. Mirror images inherit upstream's license. Gokapi is AGPL-3.0, so what we publish to GHCR is AGPL-3.0. This is separate from this template's Apache-2.0 license. For self-hosted operators, AGPL §13 requires that network users can reach the source, which is trivially the upstream repo. Read upstream's LICENSE before publishing mirrored images to a public registry.

Template (fork for your own pipeline): https://github.com/THEKROLL-LTD/oss-mirror-build

Live example (our Gokapi mirror, nightly builds, audit bundles retained 90 days): https://github.com/THEKROLL-LTD/mirror-gokapi

PRs with Dockerfile overrides for other upstreams are welcome. The live mirror has no SLA. If you need production-grade assurance, fork the template.

If your team is stuck between shipping unsafe Docker Hub images and paying for Chainguard, talk to us. We built the override, and we fixed the parts that broke.